By 2026, context matters more than compute for AI applications. According to Redis 2026 Predictions, AI apps will fail without proper context delivery: agents will struggle not with reasoning but with finding the right data across fragmented systems. In-memory databases like Redis have emerged as context engines that store, index, and serve structured, unstructured, short-term, and long-term data in one abstraction, delivering the sub-millisecond responses that real-time AI and analytics depend on. Redis’s real-time context engine for AI positions Redis as the fast lane for the AI stack, combining vector search, hybrid search, and semantic caching for RAG and agent memory. The redis-py Python client provides vector similarity search, KNN, and hybrid queries so Python teams can build RAG and caching layers without leaving the language that dominates ML and data pipelines. This article examines where Redis stands in 2026, why context engines are critical, and how Python and redis-py power the in-memory foundation of AI infrastructure.

Why Context Engines Matter in 2026

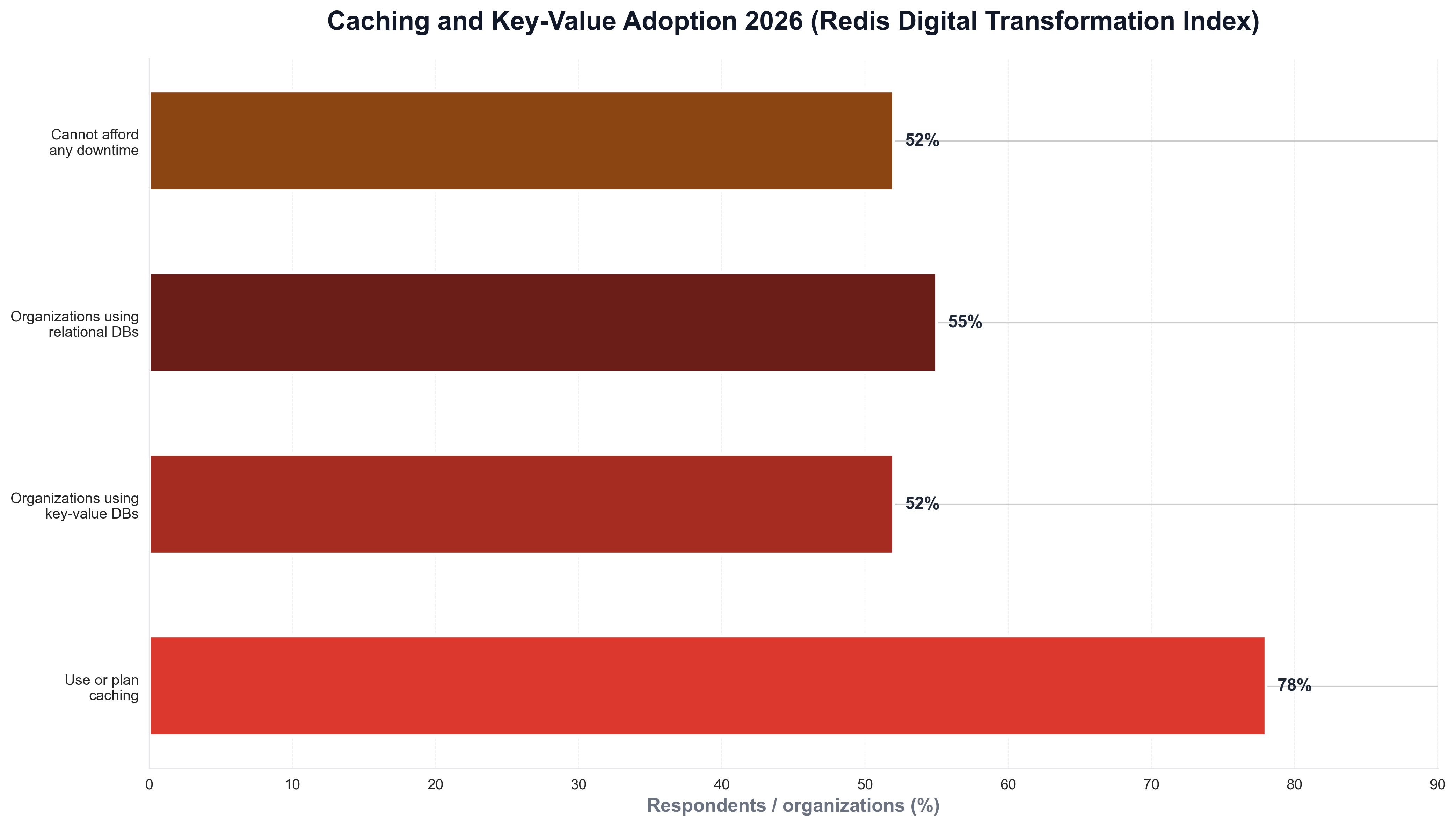

AI applications depend on relevant, concise context for every LLM call. Redis 2026 Predictions argue that the challenge is assembling the right context across vector stores, long-term memory, session state, SQL, and more, without sending so much data that cost and latency climb. Context engines address this with a unified abstraction that stores, indexes, and serves all of these data types in one place, with the stated benefits of lower latency, fewer surprises, and simpler scaling. In-memory databases as the foundation of real-time AI explains that in-memory systems enable the sub-millisecond responses essential for real-time AI and analytics; Redis maintains durability through persistence while controlling cost with tiered storage (hot data in RAM, warm data on SSD). For Python developers, redis-py is the standard client: it supports caching (get/set, pipelines), vector search (KNN, range queries, hybrid search), and Redis Stack features, so Python apps can act as the context layer between LLMs and data. The accompanying chart, generated with Python and matplotlib using Redis Digital Transformation Index–style data, illustrates caching and key-value adoption in 2026.

In 2026, Redis and Python together form the default choice for teams building RAG, agents, and real-time AI.
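The caching role described above (get/set with expiry) is often implemented as a cache-aside helper. The following is a minimal sketch, not an official redis-py recipe: the function name `get_or_compute` and its parameters are hypothetical, and `client` is assumed to be any object exposing redis-py's `get` and `set(..., ex=...)` methods, such as `redis.Redis(decode_responses=True)`.

```python
import json
from typing import Any, Callable, Dict


def get_or_compute(
    client: Any,
    key: str,
    compute: Callable[[], Dict[str, Any]],
    ttl_seconds: int = 3600,
) -> Dict[str, Any]:
    """Cache-aside lookup (hypothetical helper).

    Returns the cached JSON value when the key exists; otherwise calls
    `compute`, stores the result with a TTL, and returns it.
    """
    cached = client.get(key)
    if cached is not None:
        # Cache hit: decode the stored JSON string (or bytes).
        return json.loads(cached)

    # Cache miss: build the value, then store it with an expiry so that
    # stale context eventually falls out of the cache.
    value = compute()
    client.set(key, json.dumps(value), ex=ttl_seconds)
    return value
```

With a running server, a call might look like `get_or_compute(redis.Redis(decode_responses=True), "user:1000:profile", load_profile)`, where `load_profile` is whatever slow lookup the application already has. Because the helper only depends on `get` and `set`, it can be exercised in tests with an in-memory stand-in.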

Redis as the Real-Time Context Engine for AI

Redis for AI describes Redis as the real-time context engine: high-performance vector search plus hybrid search (filtering, exact matching, vector similarity) to support retrieval-augmented generation and agent memory recall. Redis AI ecosystem integrations list integrations with LangChain, LangGraph, LiteLLM, Mem0, and Kong AI Gateway for vector storage, memory persistence, semantic caching, agent coordination, and intelligent request routing. RedisVL momentum reports roughly 500,000 downloads in October 2025 alone for Redis’s AI-native developer interface, signaling strong adoption. Python is at the center: redis-py and RedisVL (Python) let developers index vectors, run KNN and range queries, and combine vector search with text search and filters in a single API. A minimal Python example using redis-py for caching and vector-ready usage looks like this:

import redis

r = redis.Redis(host="localhost", port=6379, decode_responses=True)

r.set("user:1000:profile", '{"name": "Alice", "role": "admin"}', ex=3600)

cached = r.get("user:1000:profile")

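# KNN search needs redis.commands.search.query.Query with dialect 2 and the embedding packed as float32 bytes:

# r.ft().search(Query("*=>[KNN 5 @embedding $vec AS score]").dialect(2), query_params={"vec": query_embedding_bytes})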

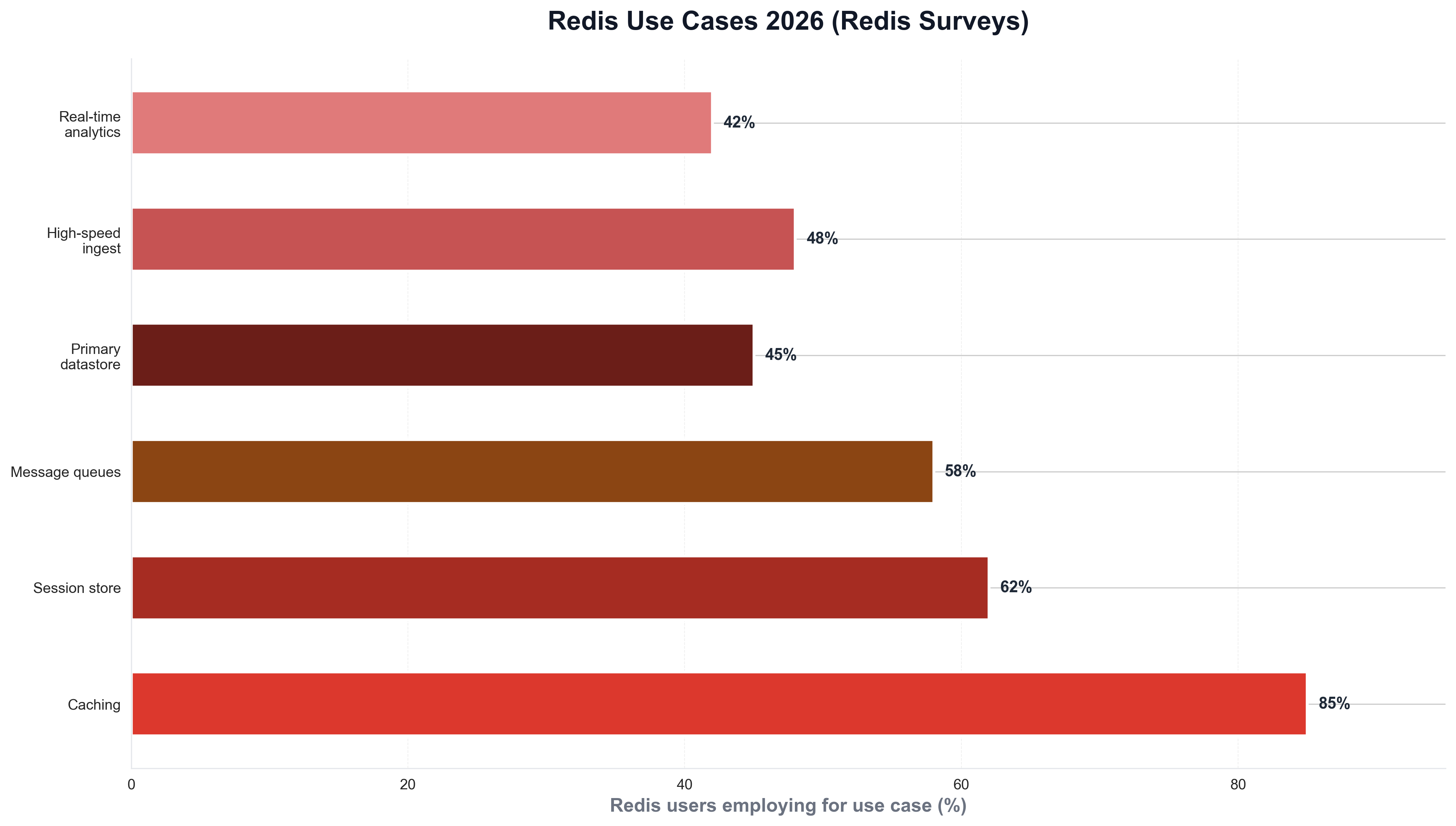

That pattern (Python for app logic, Redis for caching and vector search) is the norm in 2026 for RAG, semantic caching, and agent context, without lock-in to a single-purpose vector database. The accompanying chart, produced with Python, summarizes Redis use cases (caching, session store, message queues, primary datastore, high-speed ingest, real-time analytics) as seen in Redis surveys.
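Semantic caching, one of the patterns named in this section, reuses a stored LLM response when a new prompt's embedding is close enough to a previously seen one. The sketch below illustrates only the idea, in plain Python with a linear scan; it is not how Redis or any Redis product implements it. The class name `SemanticCache` and the 0.9 threshold are assumptions, and a Redis-backed version would replace the scan with a KNN query against a vector index.

```python
import math
from typing import List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """In-process illustration of a semantic cache (hypothetical class)."""

    def __init__(self, threshold: float = 0.9) -> None:
        # Minimum similarity at which a stored response counts as a hit.
        self.threshold = threshold
        self._entries: List[Tuple[List[float], str]] = []

    def store(self, embedding: Sequence[float], response: str) -> None:
        """Remember a response under the embedding of the prompt that produced it."""
        self._entries.append((list(embedding), response))

    def lookup(self, embedding: Sequence[float]) -> Optional[str]:
        """Return the most similar stored response, or None below the threshold."""
        best_score = -1.0
        best_response: Optional[str] = None
        for stored, response in self._entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None
```

The threshold is the main design choice: set too low, unrelated prompts receive each other's answers; set too high, the cache rarely hits and the LLM call is made anyway.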

Redis Flex, Cost, and the 2026 Product Roadmap

Redis Cloud at AWS re:Invent 2025 and Redis fall release 2025 highlight Redis Flex, now generally available, offering up to 75% cost reduction on large caches by letting users customize RAM and SSD mixtures. Redis LangCache and enhanced AI-specific tools expand the platform for GenAI applications. Introducing another era of fast reinforces Redis’s focus on speed and performance as the foundation of the AI stack. For Python teams, redis-py and RedisVL provide the client-side interface to these features: caching with TTLs and pipelines, vector indexes (FLAT, HNSW), and hybrid queries so that Python services can deliver context to LLMs with minimal latency. In 2026, Redis is not only a cache; it is the context engine that Python developers use to store, index, and serve the right data at the right time.
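To make the choice between FLAT and HNSW vector indexes concrete, the sketch below assembles the arguments of an `FT.CREATE` command for a hash-based index with one vector field. The index name, key prefix, and the `content` and `category` fields are illustrative assumptions, as are the helper names; only FLAT and HNSW are handled, and the resulting list is meant to be passed to redis-py's generic `execute_command`.

```python
from typing import Any, List

SUPPORTED_ALGORITHMS = ("FLAT", "HNSW")
SUPPORTED_METRICS = ("COSINE", "L2", "IP")


def build_ft_create_args(
    index_name: str,
    key_prefix: str,
    vector_field: str,
    dim: int,
    algorithm: str = "HNSW",
    distance_metric: str = "COSINE",
) -> List[Any]:
    """Build FT.CREATE arguments for a hash index with one FLOAT32 vector field."""
    if algorithm not in SUPPORTED_ALGORITHMS:
        raise ValueError(f"unsupported algorithm: {algorithm}")
    if distance_metric not in SUPPORTED_METRICS:
        raise ValueError(f"unsupported distance metric: {distance_metric}")

    # Attribute name/value pairs for the vector field; the count that
    # precedes them in the command is the total number of these tokens.
    vector_attrs = ["TYPE", "FLOAT32", "DIM", dim, "DISTANCE_METRIC", distance_metric]

    return [
        "FT.CREATE", index_name,
        "ON", "HASH",
        "PREFIX", 1, key_prefix,
        "SCHEMA",
        "content", "TEXT",      # hypothetical full-text field
        "category", "TAG",      # hypothetical tag field for filtering
        vector_field, "VECTOR", algorithm, len(vector_attrs), *vector_attrs,
    ]


def create_index(client: Any, **kwargs: Any) -> Any:
    """Send the command through a redis-py client's execute_command."""
    return client.execute_command(*build_ft_create_args(**kwargs))
```

As a rough guide, FLAT performs an exact brute-force scan and suits smaller datasets, while HNSW trades some recall for faster approximate search on larger ones; which one fits depends on dataset size and latency targets.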

Vector Search in Python: redis-py and RedisVL

Redis vector search with redis-py and the redis-py vector similarity examples document index types (FLAT, HNSW, SVS-VAMANA), vector dimensions, distance metrics (COSINE, L2), and query types: KNN (top-k similar vectors), range/radius queries, and hybrid (vector + text + filters). Python developers use .ft().search() with dialect 2 and query syntax such as *=>[KNN 5 @vector $vec AS score] to run vector similarity from application code. Redis vector search concepts and RedisVL query API describe filter expressions, runtime parameters, and cluster optimization so that Python apps can scale RAG and agent memory on Redis without rewriting for a different backend. In 2026, Python and redis-py are the standard combination for in-memory vector search and context delivery in AI stacks.
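The KNN and hybrid query forms described above can be sketched as follows. The helper names, the `content` return field, and the example filter are assumptions for illustration; the redis-py calls used (`Query`, `.sort_by`, `.return_fields`, `.paging`, `.dialect`, and `ft(...).search(..., query_params=...)`) are part of the client's search API. The vector is packed as little-endian float32 bytes, which is assumed to match an index declared with TYPE FLOAT32.

```python
import struct
from typing import Any, Optional, Sequence


def pack_float32(vector: Sequence[float]) -> bytes:
    """Pack a vector as little-endian float32 bytes for use as a query parameter."""
    return struct.pack(f"<{len(vector)}f", *vector)


def knn_query_string(
    k: int,
    vector_field: str = "vector",
    filter_expr: Optional[str] = None,
    score_alias: str = "score",
) -> str:
    """Build a KNN query; with filter_expr set, it becomes a hybrid query."""
    # "*" means no pre-filter; otherwise the filter narrows the candidate
    # set before the nearest-neighbour search runs.
    prefilter = f"({filter_expr})" if filter_expr else "*"
    return f"{prefilter}=>[KNN {k} @{vector_field} $vec AS {score_alias}]"


def knn_search(
    client: Any,
    index_name: str,
    embedding: Sequence[float],
    k: int = 5,
    filter_expr: Optional[str] = None,
) -> Any:
    """Run the query through redis-py (requires the redis package and a live index)."""
    from redis.commands.search.query import Query  # imported lazily

    query = (
        Query(knn_query_string(k, filter_expr=filter_expr))
        .sort_by("score")                    # smaller distance first
        .return_fields("content", "score")   # "content" is a hypothetical field
        .paging(0, k)
        .dialect(2)                          # parameterised vector queries need dialect 2
    )
    return client.ft(index_name).search(
        query, query_params={"vec": pack_float32(embedding)}
    )
```

For example, `knn_query_string(3, filter_expr="@category:{docs}")` yields a hybrid query that only considers documents tagged `docs` before ranking by vector distance.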

Learning Agents and Feedback-Driven Context

Learning agents with Redis explores feedback-driven context engineering for robust agent behavior: using Redis to store and retrieve context that improves over time based on feedback. This aligns with the 2026 prediction that context engines will be critical infrastructure—agents need persistent, indexed, fast context, and Redis provides it. Python is the primary language for agent frameworks (LangChain, LangGraph, custom loops); redis-py and RedisVL allow those agents to read and write context (session state, long-term memory, vector search results) with sub-millisecond latency. In 2026, Python developers building agents will standardize on Redis (or similar in-memory context engines) and redis-py for context delivery that scales.
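One way to picture session state plus feedback-weighted memory is with two plain Redis data structures: a capped list of recent turns and a sorted set whose scores move with feedback. This is a sketch under stated assumptions, not the approach from the Learning agents article: the key naming scheme and all four helper names are hypothetical, and `client` is assumed to expose redis-py's `rpush`, `ltrim`, `expire`, `lrange`, `zincrby`, and `zrevrange`.

```python
from typing import Any, List


def remember_turn(
    client: Any,
    session_id: str,
    message: str,
    max_turns: int = 20,
    ttl_seconds: int = 1800,
) -> None:
    """Append a turn to short-term session memory, keeping only the newest turns."""
    key = f"session:{session_id}:turns"
    client.rpush(key, message)
    client.ltrim(key, -max_turns, -1)   # drop everything but the last max_turns items
    client.expire(key, ttl_seconds)     # idle sessions expire on their own


def recall_turns(client: Any, session_id: str) -> List[Any]:
    """Return the stored turns, oldest first."""
    return client.lrange(f"session:{session_id}:turns", 0, -1)


def record_feedback(client: Any, agent_id: str, memory_id: str, delta: float) -> Any:
    """Raise or lower a memory's score based on feedback (positive or negative delta)."""
    return client.zincrby(f"agent:{agent_id}:memory_scores", delta, memory_id)


def top_memories(client: Any, agent_id: str, n: int = 3) -> List[Any]:
    """Return the ids of the n highest-scored memories."""
    return client.zrevrange(f"agent:{agent_id}:memory_scores", 0, n - 1)
```

An agent loop could call `remember_turn` after each exchange, `record_feedback` when a retrieved memory helped or hurt, and `top_memories` when assembling the next prompt, so that the context it retrieves shifts toward what has worked.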

Conclusion: Redis as the Context Engine Default in 2026

In 2026, Redis is the real-time context engine for AI. Context matters more than compute; in-memory databases deliver sub-millisecond responses for RAG, agent memory, and semantic caching. Redis Flex offers up to 75% cost reduction on large caches; RedisVL and redis-py give Python teams vector search, KNN, hybrid queries, and caching in one stack. Python and redis-py form the default choice for context delivery in GenAI applications. The story in 2026 is clear: Redis is where context lives, and Python is how developers build on it.